How AI assistants are shaping gender bias in technology

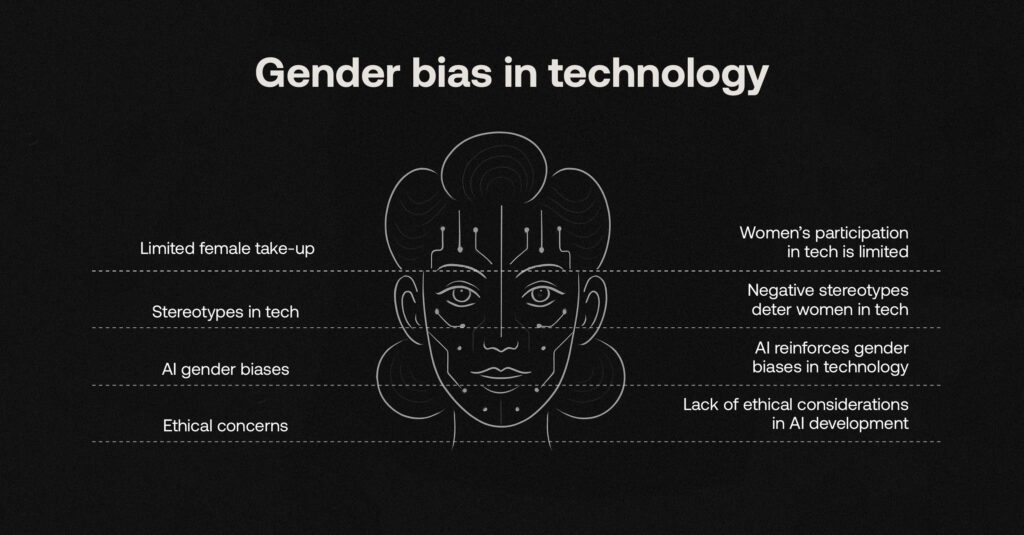

AI assistants are designed to make our lives easier, but they are also shaping how we interact with technology in subtle ways. As conversations around gender bias in technology continue to grow, questions are being raised about why many of these assistants default to female voices and what that signals about the roles they are designed to play.

Across the UK and Europe, this topic is gaining more attention, particularly as frameworks like the EU AI Act place greater focus on how AI systems are designed, trained and deployed. It is prompting a closer look at the assumptions built into everyday tools that we often take for granted. This shift reflects a broader effort to understand and address gender bias in technology at both the regulatory and design levels.

This increased scrutiny is placing a spotlight on the technology industry and the culture and perceptions that shape it. As a technology company that champions the careers and growth of all our employees, RelyComply is highly aware of the stereotypes that shape our industry: a largely inaccessible world of computer science niches, made famous by competitive, often controversial male figures who dominate the news and popular culture (The Social Network being a prime example).

Real-world impact of gender bias in technology

These perceptions are not without consequence. According to research by PwC, only 27% of female students would consider a career in technology, compared to 61% of male students, and just 3% of female students say it is their first choice. This highlights the broader issue of gender bias in technology, which AI assistants such as Siri and Alexa may reinforce by casting female voices in passive roles, even though they were designed with good intentions as ‘friendly helpers’.

As AI usage continues to grow, these challenges are reinforced not only by the technology itself but also by the platforms that deploy it every day. AI systems need to operate as active and responsible participants in decision-making, supporting fair outcomes regardless of personal identifiers such as gender. This applies across every sector where AI plays a critical role, and platforms must balance human-like intelligence with strong ethical oversight to ensure fairness prevails.

Is AI reshaping what we believe to be true about the world and identity?

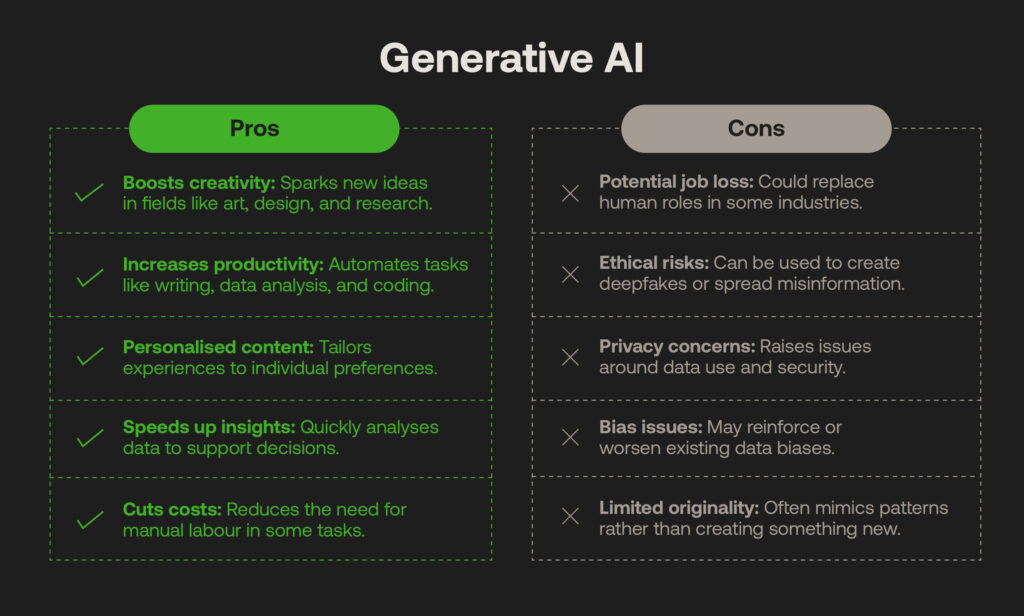

Several concerns have emerged around the rapid growth of Generative AI (GenAI). A common theme is the technology’s time-saving effectiveness, which increasingly fuels the perception that it will ‘replace’ job roles rather than ease repetitive, manual tasks as originally intended. This risks fostering an I, Robot-style ‘man vs machine’ mentality which, while extreme, still detracts from AI’s more considered, life-changing applications, such as spotting diseases early, managing energy efficiency, or uncovering criminal behaviour hiding in plain sight.

Nor can we ignore the more controversial GenAI use cases. Deepfake technology challenges our understanding of identity and truth, enabling the spread of harmful content online and attempts to gain unauthorised access to accounts by using synthetic media to deceive biometric authentication systems. The loss of autonomy over our identities, gendered or otherwise, is a significant concern; Denmark has recently led the way in protecting everyone’s right to “their own body, facial features and voice” to combat this kind of misuse.

“Human beings can be run through the digital copy machine and be misused for all sorts of purposes, and I’m not willing to accept that.” – Danish Culture Minister, Jakob Engel-Schmidt

Standards for AI use are receiving increasing attention from governments worldwide. There is growing scrutiny of how AI systems are trained and applied, particularly where they are used to detect risk and anomalies, as bias can still influence outcomes in these areas. This level of scrutiny is key to addressing gender bias in technology, because the data used to train these systems can directly influence how individuals are assessed. If systems are built on historical datasets that carry inherent human biases, their outputs will often reflect and reinforce those biases.

How ‘supportive’ AI design can reinforce gender bias in technology

The association between obedience and the female voice is an enduring problem. The UN notes how popular language models can associate job titles with gender, linking women with roles such as “nurse” and men with roles such as “scientist.” There is also a documented link between gendered AI voice assistants and user behaviour. A study by Johns Hopkins engineers found evidence of underlying bias towards ‘supportive’ feminine voices, while gender-neutral assistants experienced fewer hostile interactions and interruptions when errors were introduced; this hostile behaviour came more often from male participants. This suggests that some AI systems, particularly voice assistants, are designed to mirror user expectations by responding in ways that feel helpful and compliant. When these behaviours are paired with gendered voices, they can reinforce the idea that women are better suited to supportive, obedient roles.

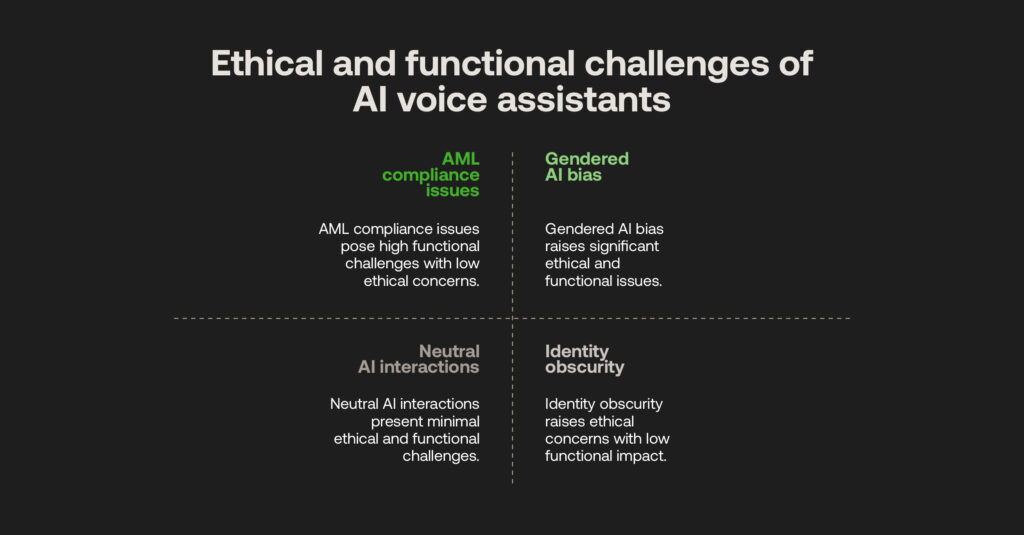

This sets a concerning precedent, as AI systems are not inherently designed to question or challenge human decision-making without deliberate oversight or intervention. This is particularly relevant in the financial world. ‘Silent’ AML compliance platforms operate according to predefined rules and thresholds, which may result in critical risks being overlooked. User-based risk thresholds can reflect historical biases within datasets, particularly if those datasets do not represent a diverse compliance perspective, and AI models can be trained in ways that unintentionally reinforce the biases the data contains. A lack of transparency around how anti-financial crime systems store and use customer data within their AI processes can also make it difficult for users to understand how their data is used, how decisions are made, or whether decisions made about them are fair and free from bias.

“Thoughtful design – especially in how these agents portray gender – is essential to ensure effective user support without promoting harmful stereotypes. Addressing these biases in voice assistance and AI will ultimately help us create a more equitable digital and social environment.” – Amama Mahmood (Johns Hopkins study)

Technologies must therefore be built with appropriate human oversight. Choices made during the design and deployment of AI systems directly affect end users and real-world outcomes. In financial technology, this could include lending platforms that reinforce bias against certain professions or backgrounds when assessing loan applications, or chatbots that rely on generalised responses when explaining decisions, which may reflect biased assumptions or overlook individual circumstances. Addressing these issues is key to reducing gender bias in technology, ensuring systems provide clearer, more context-aware responses and fairer outcomes for all users.

How human oversight and AI can reduce bias and drive societal change

Automated AI technology can be a true force for good in the right hands, particularly when guided by ethical frameworks and supported by regulation. As the perception of women as ‘helpers’ continues to be challenged, AI platforms can become active participants rather than passive tools, designed to operate without bias and contribute to fairer outcomes alongside their human counterparts.

Modern RegTech aims to advance this approach by balancing AI and human-led processes in a transparent way that can be audited by customers and regulators alike. At RelyComply, we’ve ensured accountability on both sides, using machine learning to identify and prioritise suspicious behaviours at a scale not possible through manual processes alone. This enables analysts to focus on higher-risk alerts and supports more accurate detection of potential financial crime. We also employ ‘explainability’ techniques to demonstrate how our models are trained, including what data is used and how outcomes are generated.

Because each of the model’s outputs and decisions is subject to review, human analysts and AI systems share responsibility for detection and investigation, combining the platform’s analytical capability with human judgement and oversight. Both the technology and its users play a role in ensuring AML compliance is applied ethically: customer data is handled in line with privacy laws, and decisions are continuously evaluated to improve outcomes. The result is a process that is collaborative and accountable, rather than one where systems simply execute instructions without question.

Our platform has been built to foster better decision-making through automation and industry-leading AI accountability, at a time when growing awareness of cognitive bias is driving positive transformation across the technology sector. If regulation and legislation can help the financial industry change the AI narrative, forward-thinking platforms can play an even more effective role in crime detection while reducing the risk of reinforcing gendered stereotypes.

Technology is not going anywhere; it is crucial for societal progress. With ethical oversight becoming the norm, addressing broader forms of bias, including gender bias in technology, is a key step towards ensuring we’re heading in the right direction.